Introduction

Building AI systems in 2026 has moved beyond conversational chatbots into increasingly autonomous agentic systems capable of planning, tool orchestration, memory management and long-running task execution.

However, the term “AI agent” now spans an enormous range of architectures, from simple prompt-driven tool callers to sophisticated stateful runtimes. To reason about these systems more precisely, this article uses the term AI Worker to describe a production-grade autonomous execution system composed of orchestration, planning, memory, tool execution and stability mechanisms.

This architectural evolution did not emerge all at once. Each generation of agent design solved a specific systems problem: hallucinated reasoning, sequential bottlenecks, planner fragility, stateless execution and runtime instability. This article traces that progression from reactive loops to deterministic compilation, along with the engineering pressures that forced each shift.

The AI Worker Architecture

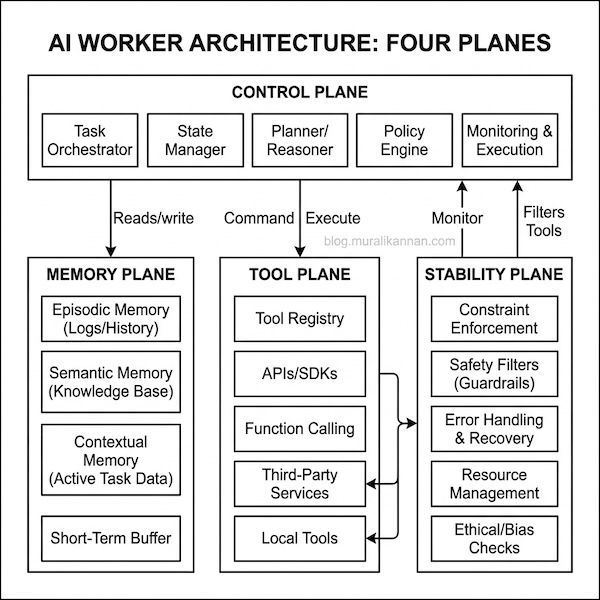

To move beyond simple wrappers, we must treat agents as distributed systems. We define the AI Worker through four distinct operational planes:

The 4 Plane Architecture of a Production AI Worker.

The 4 Plane Architecture of a Production AI Worker.

These planes represent logical operational boundaries, not necessarily separate deployable services or physical isolation domains.

- The Control Plane: The “Brain.” Responsible for high-level reasoning, task decomposition (planning) and state management.

- The Tool Plane: The “Hands.” A registry of external affordances (APIs, DBs, SDKs) and the invocation logic required to execute them.

- The Memory Plane: The “Experience.” Stores short-term session context and long-term episodic reflections. Memory management is a complex topic we will explore in a separate deep dive.

- The Stability Plane: The “Guardrails.” Enforces budgetary limits, safety filters and consistency checks to ensure the worker remains reliable.
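To make these boundaries concrete, here is a minimal single-process sketch of how the four planes might be wired together. All class names (`ToolPlane`, `MemoryPlane`, `StabilityPlane`, `ControlPlane`) and the tool-call budget are hypothetical illustrations, not a standard API. For simplicity, the Control Plane receives a fixed plan instead of calling an LLM planner.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


class ToolPlane:
    """The 'Hands': a registry of named tools and the logic to invoke them."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def invoke(self, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**kwargs)


@dataclass
class MemoryPlane:
    """The 'Experience': short-term session context and long-term notes."""
    session: List[str] = field(default_factory=list)
    long_term: List[str] = field(default_factory=list)


@dataclass
class StabilityPlane:
    """The 'Guardrails': here reduced to a single budget on tool calls."""
    max_tool_calls: int = 5
    calls_made: int = 0

    def charge(self) -> None:
        if self.calls_made >= self.max_tool_calls:
            raise RuntimeError("tool-call budget exhausted")
        self.calls_made += 1


class ControlPlane:
    """The 'Brain': executes a plan by routing each step through the other planes."""

    def __init__(self, tools: ToolPlane, memory: MemoryPlane, stability: StabilityPlane) -> None:
        self.tools = tools
        self.memory = memory
        self.stability = stability

    def run(self, plan: List[Tuple[str, Dict[str, Any]]]) -> List[Any]:
        results = []
        for tool_name, args in plan:
            self.stability.charge()                           # guardrail before every call
            result = self.tools.invoke(tool_name, **args)     # the tool plane does the work
            self.memory.session.append(f"{tool_name} -> {result}")  # record in session memory
            results.append(result)
        return results


tools = ToolPlane()
tools.register("add", lambda a, b: a + b)
worker = ControlPlane(tools, MemoryPlane(), StabilityPlane(max_tool_calls=2))
print(worker.run([("add", {"a": 1, "b": 2})]))  # [3]
```

The budget check lives in `StabilityPlane` rather than inside individual tools. As a result, every call passes through the same guardrail regardless of which tool the plan selects.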

Reasoning and Action Loops

Early attempts at multi-step reasoning used Chain-of-Thought (CoT) prompting. While effective for logic, CoT is closed-loop and relies entirely on the model’s internal weights. If a model lacks a specific fact, it can hallucinate a plausible-sounding reasoning path, which can lead to catastrophic failures in production.

The ReAct (Reasoning + Acting) framework addressed this hallucination gap by introducing an interleaved execution model: Thought → Action → Observation.

The E-Commerce Support Bot

Consider an e-commerce support bot tasked with resolving a refund eligibility request for a delayed order. To provide an accurate answer, the system must interleave its internal reasoning with live data lookups:

Question: "My order #123 hasn't arrived, and I want a refund."

Thought: I need to find the status of order #123 first.

Action: check_order_status(id="123")

Observation: Status: "Delayed", Arrival: "May 15th", Delay: "14 days".

Thought: The order is significantly delayed. Now I need to check the refund policy for delays.

Action: query_policy(topic="refunds for delayed orders")

Observation: Policy: "Customers are eligible for a full refund if delay exceeds 7 days."

Thought: The delay is 14 days, which exceeds the 7-day limit. I can offer a refund.

Final Answer: "Your order is delayed by 14 days. Based on our policy, you are eligible for a full refund. Would you like me to process it?"

This trajectory demonstrates how the agent uses Thoughts to maintain a mental model and Actions to fetch ground-truth data. Each Observation informs the next Thought, which prevents the model from hallucinating a status or policy it does not actually have access to.
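The loop itself is small. The sketch below replays the support-bot trajectory with a scripted stand-in for the model (`scripted_llm`) and two stub tools. All of these are hypothetical; a real system would call an LLM at each step and parse its reply. The comment on `llm(history)` marks the per-step model round trip.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tool stubs that return the observations from the trace above.
TOOLS: Dict[str, Callable[[str], str]] = {
    "check_order_status": lambda order_id: 'Status: "Delayed", Arrival: "May 15th"',
    "query_policy": lambda topic: "Customers are eligible for a full refund if delay exceeds 7 days.",
}


def scripted_llm(history: List[str]) -> Tuple[str, str, str]:
    """Stand-in for a model call that returns (thought, action, argument).

    A real worker would send the whole history to an LLM and parse its reply;
    this stub just replays the support-bot trajectory step by step.
    """
    step = sum(1 for line in history if line.startswith("Observation:"))
    if step == 0:
        return "I need the status of order #123.", "check_order_status", "123"
    if step == 1:
        return "Now I need the refund policy for delays.", "query_policy", "refunds for delayed orders"
    return "The delay exceeds the 7-day limit.", "finish", "You are eligible for a full refund."


def react(question: str, llm=scripted_llm, max_steps: int = 5) -> Tuple[str, List[str]]:
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, action, arg = llm(history)        # one LLM round trip per step
        history.append(f"Thought: {thought}")
        if action == "finish":
            history.append(f"Final Answer: {arg}")
            return arg, history
        history.append(f"Action: {action}({arg!r})")
        observation = TOOLS[action](arg)           # fetch ground truth instead of guessing
        history.append(f"Observation: {observation}")
    raise RuntimeError("step budget exhausted without a final answer")


answer, trace = react("My order #123 hasn't arrived, and I want a refund.")
print("\n".join(trace))
```

Each iteration must wait for both a model call and a tool call before it can choose the next action. Total latency therefore grows linearly with the number of steps.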

However, ReAct is highly sequential. Every tool call requires a round trip to the LLM, which leads to high latency and cost. This sequential bottleneck is the primary driver for the next evolutionary step.

Planning for Efficiency and Speed

In complex workflows, waiting for every observation before the next thought is inefficient. Reasoning becomes expensive when coupled directly to execution. If an agent needs to gather data from five sources, it should know that up front rather than discover them one by one.

ReWOO (Reasoning Without Observation) and Plan-and-Solve decouple reasoning from observation by treating the plan as a reusable artifact. The planner generates a full Blueprint of tasks whose inputs refer to earlier results through placeholders (#E1, #E2 and so on). This allows workers to execute tools in parallel wherever dependencies allow.

The Financial Research Analyst

Imagine an agent tasked with comparing the year-over-year revenue growth of three companies to find the fastest grower. In a sequential ReAct loop, the agent would wait for Company A’s data before even realizing it needed Company B. A ReWOO blueprint solves this by mapping the entire dependency graph up front:

Blueprint:

1. Fetch 2025 revenue growth for 'Company_A' (#E1)

2. Fetch 2025 revenue growth for 'Company_B' (#E2)

3. Fetch 2025 revenue growth for 'Company_C' (#E3)

4. Compare the values in #E1, #E2 and #E3 (#E4)

5. Synthesize a report from #E4

By separating the Plan from the Execution, the system can identify that the first three tasks are independent. These API calls are dispatched to parallel workers simultaneously, which significantly reduces end-to-end latency for the final report. While efficient, this pattern can struggle with dynamic branching, where the result of one step completely changes the path required for the next.
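One way to execute such a blueprint is to run it in waves. Every step whose placeholders are already resolved is dispatched at once, and its result is then substituted into later steps. In the sketch below, several pieces are hypothetical stand-ins:

- `fetch_metric` and `pick_max` replace real data tools.
- The values in `DUMMY_METRICS` are placeholders.
- The synthesis step is omitted.

The wave scheduling illustrates the parallelism described above. It is not the ReWOO paper's own worker implementation.

```python
import re
from concurrent.futures import ThreadPoolExecutor

# Placeholder values standing in for a real financial data API.
DUMMY_METRICS = {"Company_A": 0.12, "Company_B": 0.31, "Company_C": 0.07}

TOOLS = {
    "fetch_metric": lambda company: DUMMY_METRICS[company],
    "pick_max": lambda **values: max(values, key=values.get),
}

# Blueprint: evidence id -> (tool name, args). "#E<n>" strings are placeholders.
BLUEPRINT = {
    "#E1": ("fetch_metric", {"company": "Company_A"}),
    "#E2": ("fetch_metric", {"company": "Company_B"}),
    "#E3": ("fetch_metric", {"company": "Company_C"}),
    "#E4": ("pick_max", {"Company_A": "#E1", "Company_B": "#E2", "Company_C": "#E3"}),
}

PLACEHOLDER = re.compile(r"#E\d+")


def deps_of(args):
    """Return the set of placeholders an argument dict depends on."""
    return {v for v in args.values() if isinstance(v, str) and PLACEHOLDER.fullmatch(v)}


def run_blueprint(blueprint, tools):
    evidence, pending, waves = {}, dict(blueprint), []
    with ThreadPoolExecutor() as pool:
        while pending:
            # Every step whose placeholders are all resolved can run now.
            ready = sorted(eid for eid, (_, args) in pending.items()
                           if deps_of(args).issubset(evidence))
            if not ready:
                raise ValueError("blueprint has unresolvable or cyclic placeholders")
            futures = {}
            for eid in ready:
                tool, args = pending.pop(eid)
                deps = deps_of(args)
                resolved = {k: evidence[v] if v in deps else v for k, v in args.items()}
                futures[eid] = pool.submit(tools[tool], **resolved)   # dispatched concurrently
            for eid, future in futures.items():
                evidence[eid] = future.result()
            waves.append(ready)
    return evidence, waves


evidence, waves = run_blueprint(BLUEPRINT, TOOLS)
print(waves)             # [['#E1', '#E2', '#E3'], ['#E4']]
print(evidence["#E4"])   # Company_B
```

For I/O-bound tools, the first wave takes roughly as long as the slowest of its three calls rather than the sum of all three. Threads are sufficient for that kind of workload.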

Turning Plans into Safe Actions

Even with explicit plans, LLMs often generate invalid steps: they call nonexistent tools or pass arguments of the wrong type. This fragility is unacceptable for production-grade orchestration.

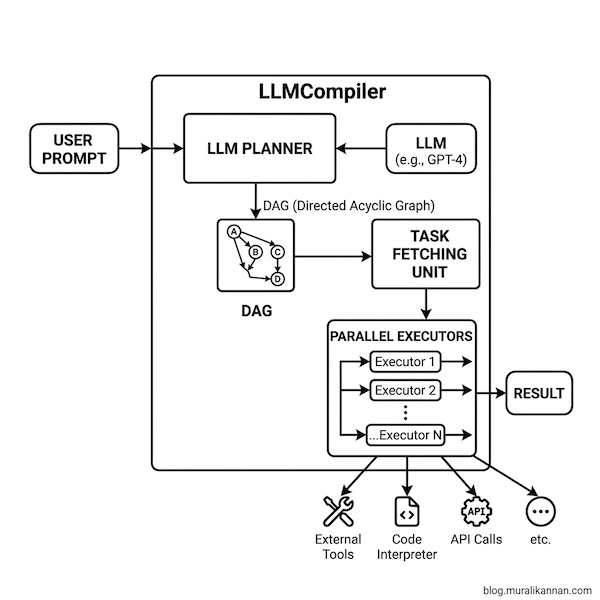

Architectures like LLMCompiler and PlanCompiler introduced a compilation layer. This transforms the planner from an unrestricted text generator into a constrained orchestration compiler. The LLM generates a high level symbolic plan, which the compiler validates and translates into a type safe Directed Acyclic Graph (DAG) of function calls.

The Compilation Flow. A user prompt is converted into a DAG. A Task Fetching Unit dispatches ready nodes to Parallel Executors ensuring validated execution.

The Compilation Flow. A user prompt is converted into a DAG. A Task Fetching Unit dispatches ready nodes to Parallel Executors ensuring validated execution.

The Cloud Migration Engineer

A DevOps agent migrating a suite of microservices must scan Dockerfiles, check internal dependencies and build new images. A symbolic compiler treats these instructions as a structured DAG to ensure build safety:

```json
{
  "tasks": [
    {"id": 1, "action": "scan_service_A", "deps": []},
    {"id": 2, "action": "scan_service_B", "deps": []},
    {"id": 3, "action": "build_image", "args": ["#E1", "#E2"], "deps": [1, 2]}
  ]
}
```

Tasks 1 and 2 are dispatched to a parallel execution pool immediately. Task 3 is held in a queue until its dependencies are resolved. This deterministic scheduling ensures that the build step never starts with incomplete or hallucinated inputs. It can greatly improve first-pass success rates, at the cost of requiring a strict tool registry.
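A compile step like this can be approximated with Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+). The sketch below validates the JSON plan above against a tool registry. It rejects unknown tools, wrong argument counts, undeclared dependencies and cycles, and then emits batches of tasks that may run in parallel.

Three pieces are assumptions made for illustration:

- `TOOL_REGISTRY`
- `PlanError`
- the rule that `#E<n>` refers to the output of task `n`

LLMCompiler and PlanCompiler define their own plan formats.

```python
import json
from graphlib import CycleError, TopologicalSorter

# Hypothetical registry: tool name -> number of arguments it accepts.
TOOL_REGISTRY = {"scan_service_A": 0, "scan_service_B": 0, "build_image": 2}

PLAN = """{"tasks": [
  {"id": 1, "action": "scan_service_A", "deps": []},
  {"id": 2, "action": "scan_service_B", "deps": []},
  {"id": 3, "action": "build_image", "args": ["#E1", "#E2"], "deps": [1, 2]}
]}"""


class PlanError(ValueError):
    """Raised when an LLM-generated plan fails validation."""


def compile_plan(raw):
    """Validate a JSON plan and return batches of task ids that may run in parallel."""
    tasks = {t["id"]: t for t in json.loads(raw)["tasks"]}
    for tid, task in tasks.items():
        action, args, deps = task["action"], task.get("args", []), task["deps"]
        if action not in TOOL_REGISTRY:
            raise PlanError(f"task {tid}: unknown tool {action!r}")
        if len(args) != TOOL_REGISTRY[action]:
            raise PlanError(f"task {tid}: expected {TOOL_REGISTRY[action]} args, got {len(args)}")
        missing = set(deps).difference(tasks)
        if missing:
            raise PlanError(f"task {tid}: unknown dependencies {sorted(missing)}")
        for ref in args:
            # Assumed convention: "#E<n>" is the output of task n, which must be a dependency.
            if isinstance(ref, str) and ref.startswith("#E") and int(ref[2:]) not in deps:
                raise PlanError(f"task {tid}: {ref} is not a declared dependency")

    sorter = TopologicalSorter({tid: task["deps"] for tid, task in tasks.items()})
    try:
        sorter.prepare()                      # raises CycleError if the graph is not a DAG
    except CycleError as exc:
        raise PlanError(f"plan contains a cycle: {exc.args[1]}") from exc

    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())    # all dependencies of these tasks are done
        batches.append(ready)
        sorter.done(*ready)                   # a real executor would run the batch first
    return batches


print(compile_plan(PLAN))  # [[1, 2], [3]]
```

Validation happens entirely before any tool runs. A plan that names a nonexistent tool is therefore rejected outright instead of failing halfway through a build.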

How Agents Learn and Build Skills

Most agents repeat the same mistakes across sessions because context windows are ephemeral. A production worker must treat its history as a dataset for improvement. Reflexion and Voyager introduced learning loops to solve this static agent problem.

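In these architectures, agents analyze their own failures and store verbal reflections in episodic memory (Reflexion). Successful procedures are saved as reusable, executable Skills in a skill library that is searched by embedding similarity, typically backed by a Vector Database (Voyager). Together, these mechanisms let the system improve over time without constantly re-reasoning from scratch.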

The Smart Home Orchestrator (Voyager Pattern)

When a user asks a home agent to “start the morning routine” (coffee, thermostat to 20 degrees, geyser at 50 degrees), a Voyager-style system treats this sequence as an executable skill:

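```python
# New Skill Saved: morning_routine.py
# `iot` is a hypothetical device-control client passed in by the runtime.

def run_morning_routine(iot):
    """Start the coffee maker, set the thermostat to 20 and the geyser to 50 degrees."""
    iot.start_device("coffee_maker")
    iot.set_level("thermostat", 20)
    iot.set_level("geyser", 50)
```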

The system retrieves and executes the Python function directly, so the behavior is identical and durable across sessions. This introduces significant Memory Plane complexity, which makes retrieval relevance and stale-skill management critical runtime concerns.
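The sketch below shows one way such a skill library could store and retrieve skills. Voyager indexes skills by embeddings of their descriptions. To stay self-contained, this version uses a simple bag-of-words cosine similarity in place of an embedding model.

The following names and rules are hypothetical:

- `SkillLibrary`
- the `min_score` threshold
- the failure-count staleness rule
- `FakeIoT`, a stub that only logs commands

```python
import math
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List, Optional


def bag_of_words(text: str) -> Counter:
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


@dataclass
class Skill:
    name: str
    description: str
    fn: Callable[..., object]
    failures: int = 0                 # bumped on failed runs; too many marks the skill stale


class SkillLibrary:
    def __init__(self, max_failures: int = 2, min_score: float = 0.3) -> None:
        self.skills: List[Skill] = []
        self.max_failures = max_failures
        self.min_score = min_score

    def add(self, skill: Skill) -> None:
        self.skills.append(skill)

    def record_failure(self, name: str) -> None:
        for skill in self.skills:
            if skill.name == name:
                skill.failures += 1

    def retrieve(self, query: str) -> Optional[Skill]:
        """Return the most similar non-stale skill, or None if nothing is close enough."""
        q = bag_of_words(query)
        live = [s for s in self.skills if s.failures < self.max_failures]
        scored = [(cosine(q, bag_of_words(s.description)), s) for s in live]
        if not scored:
            return None
        score, best = max(scored, key=lambda pair: pair[0])
        return best if score >= self.min_score else None


class FakeIoT:
    """Hypothetical device client that records commands instead of sending them."""

    def __init__(self) -> None:
        self.log = []

    def start_device(self, name):
        self.log.append(("start", name))

    def set_level(self, name, level):
        self.log.append(("set", name, level))


def run_morning_routine(iot):
    iot.start_device("coffee_maker")
    iot.set_level("thermostat", 20)
    iot.set_level("geyser", 50)


library = SkillLibrary()
library.add(Skill("morning_routine", "start the morning routine coffee thermostat geyser",
                  run_morning_routine))
skill = library.retrieve("please start my morning routine")
iot = FakeIoT()
skill.fn(iot)
print(iot.log)
```

Counting failures is only one possible staleness signal. A real system might also re-verify a skill whenever the underlying device API changes.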

The Automated QA Engineer

Consider an agent tasked with writing a Python function to calculate compound interest, along with its corresponding unit test. In a Reflexion loop, the agent learns from its own execution failures:

Actor (Attempt 1): Writes `def interest(p, r, t): return p * (r**t)`

Evaluator: Fails. `interest(100, 0.05, 2)` returned 0.25, expected 110.25.

Reflector: "I raised r to the power t instead of (1+r); the principal multiplier was fine. The formula should be p * (1+r)**t."

Actor (Attempt 2): Writes `def interest(p, r, t): return p * (1+r)**t`

Instead of “guessing” a fix, the agent writes a natural-language summary of the mistake. This reflection is stored in episodic memory as a lesson learned that guides the next attempt toward a correct solution.
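The loop can be sketched with three roles. Two of them are hypothetical stand-ins:

- `actor` replays the two attempts from the example instead of calling a model.
- `reflector` fills in a template instead of calling an LLM.

The evaluator runs the same test as the example. Reflections accumulate in an `episodic_memory` list that a real actor would include in its next prompt.

```python
import math
from typing import List, Tuple

# Scripted attempts from the example; a real actor would be an LLM that reads
# the accumulated reflections before writing new code.
ATTEMPTS = [
    "def interest(p, r, t): return p * (r**t)",
    "def interest(p, r, t): return p * (1+r)**t",
]


def actor(trial: int, reflections: List[str]) -> str:
    return ATTEMPTS[min(trial, len(ATTEMPTS) - 1)]


def evaluator(source: str) -> Tuple[bool, str]:
    """Run the unit test from the example and report pass/fail with feedback."""
    namespace: dict = {}
    exec(source, namespace)                      # a real system would sandbox this
    got = namespace["interest"](100, 0.05, 2)
    if math.isclose(got, 110.25):
        return True, "pass"
    return False, f"interest(100, 0.05, 2) returned {got:g}, expected 110.25"


def reflector(feedback: str) -> str:
    """Stand-in for an LLM that turns raw feedback into a verbal lesson."""
    return f"Previous attempt failed: {feedback}. Re-check the compounding base (1 + r)."


def reflexion(max_trials: int = 3) -> Tuple[str, List[str]]:
    episodic_memory: List[str] = []
    for trial in range(max_trials):
        source = actor(trial, episodic_memory)
        ok, feedback = evaluator(source)
        if ok:
            return source, episodic_memory
        episodic_memory.append(reflector(feedback))   # lesson carried into the next trial
    raise RuntimeError("no passing solution within the trial budget")


solution, lessons = reflexion()
print(solution)
print(lessons)
```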

Building Reliable and Durable Systems

Even the best models produce biased or flawed reasoning. In production, the problem shifts from “Can the model reason?” to “Can the system recover safely when reasoning fails?” Modern stability architectures treat agents as long-running, stateful workflows with durable execution.

In Multi-Agent Debate, multiple agents challenge a judgment before it is finalized. Checkpointing saves state after every node, allowing a worker to resume from its last known state after a crash.
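A minimal form of checkpointing can be sketched without any framework: persist the workflow state after each node, and skip completed nodes on restart. `run_durable`, the JSON checkpoint format and the simulated crash are illustrative assumptions. Orchestration frameworks such as LangGraph provide their own checkpointer abstractions, which this sketch does not attempt to reproduce.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Dict, List, Tuple

Node = Callable[[Dict], Dict]


def run_durable(nodes: List[Tuple[str, Node]], state: Dict, checkpoint: Path) -> Dict:
    """Run nodes in order, persisting state after each so a crash can resume."""
    done: List[str] = []
    if checkpoint.exists():                       # resume from the last saved node
        saved = json.loads(checkpoint.read_text())
        state, done = saved["state"], saved["done"]
    for name, node in nodes:
        if name in done:
            continue                              # finished before the crash; skip it
        state = node(state)
        done.append(name)
        tmp = checkpoint.with_suffix(".tmp")      # write-then-rename avoids a half-written file
        tmp.write_text(json.dumps({"state": state, "done": done}))
        tmp.replace(checkpoint)
    return state


calls = {"research": 0, "draft": 0}


def research(state: Dict) -> Dict:
    calls["research"] += 1
    return {**state, "findings": "Case X was overturned by Case Y"}


def flaky_draft(state: Dict) -> Dict:
    calls["draft"] += 1
    if calls["draft"] == 1:
        raise ConnectionError("simulated worker crash")
    return {**state, "draft": f"Answer based on: {state['findings']}"}


checkpoint = Path(tempfile.mkdtemp()) / "workflow.json"
pipeline = [("research", research), ("draft", flaky_draft)]
try:
    run_durable(pipeline, {}, checkpoint)
except ConnectionError:
    pass                                          # research output survives in the checkpoint
final = run_durable(pipeline, {}, checkpoint)
print(final, calls)
```

On the second run, the research node is skipped because the checkpoint already records it as done. Only the failed step is retried.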

The High-Stakes Legal Advisor

In high-risk domains like legal research, hallucination is a critical failure. A Multi-Agent Debate architecture hardens the output by forcing consensus between conflicting perspectives:

Agent A (Counsel): "Based on Case X the defendant is liable."

Agent B (Analyst): "Wait, Case X was overturned in 2023 by Case Y."

Agent A (Counsel): "Correct. Under Case Y the liability depends on the 'good faith' clause."

Judge LLM: "Consensus reached. Answer: Liability depends on 'good faith' per Case Y."

By identifying discrepancies early, the system can pause the durable workflow for manual intervention. Stability architectures emerge from the reality that reasoning is stochastic but system recovery must be deterministic. By using stateful orchestration (such as LangGraph), we enable partial task recovery and human-in-the-loop safety.
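The debate-then-escalate pattern can be sketched as follows. The agents are scripted functions that mirror the legal exchange above. The "judge" is a toy rule that declares consensus only when every agent's final position is identical; a real system would use LLM agents and an LLM judge. `debate`, `counsel` and `analyst` are hypothetical names. When no consensus emerges, the function returns `None` so that the surrounding workflow can pause for human review.

```python
from typing import Callable, Dict, List, Optional, Tuple

Agent = Callable[[List[str]], str]


def debate(agents: Dict[str, Agent], rounds: int = 2) -> Tuple[Optional[str], List[str]]:
    """Run debate rounds; return the consensus position, or None to escalate."""
    transcript: List[str] = []
    latest: Dict[str, str] = {}
    for _ in range(rounds):
        for name, agent in agents.items():
            position = agent(transcript)          # each agent sees all earlier arguments
            latest[name] = position
            transcript.append(f"{name}: {position}")
    positions = set(latest.values())
    # Toy judge: consensus only if every agent ends on the same position.
    return (positions.pop() if len(positions) == 1 else None), transcript


def counsel(transcript: List[str]) -> str:
    if any("Case Y" in line for line in transcript):
        return "Liability depends on 'good faith' per Case Y."
    return "The defendant is liable per Case X."


def analyst(transcript: List[str]) -> str:
    return "Liability depends on 'good faith' per Case Y."


verdict, log = debate({"Counsel": counsel, "Analyst": analyst})
print(verdict or "No consensus: pause the workflow for human review")
```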

Conclusion

The evolution of agent architectures is a journey from stochastic prompting to deterministic system engineering.

- ReAct grounded reasoning in reality.

- ReWOO solved for latency through decoupled planning.

- Compilers solved for reliability through DAG validation.

- Voyager/Reflexion solved for continuity through lifelong learning.

- Durable Orchestration solved for production stability through stateful persistence.

Building a reliable autonomous AI Worker is about designing a system that balances the flexibility of LLM reasoning with the rigor of traditional software engineering.

Citations

- ReAct: Synergizing Reasoning and Acting in Language Models (arXiv:2210.03629)

- ReWOO: Decoupling Reasoning from Observations for Efficient Augmented Language Models (arXiv:2305.18323)

- Plan-and-Solve Prompting: Improving Zero-Shot Chain-of-Thought Reasoning by Large Language Models (arXiv:2305.04091)

- LLMCompiler: An LLM Compiler for Parallel Function Calling (arXiv:2312.04511)

- PlanCompiler: A Deterministic Compilation Architecture (arXiv:2604.13092)

- Reflexion: Language Agents with Verbal Reinforcement Learning (arXiv:2303.11366)

- Voyager: An Open-Ended Embodied Agent with Large Language Models (arXiv:2305.16291)